We publish Apache Airflow as the apache-airflow package in PyPI. Installing it, however, can sometimes be tricky because Airflow is a bit of both a library and an application. Libraries usually keep their dependencies open, and applications usually pin them, but we should do neither and both simultaneously. We keep our dependencies as open as possible (in setup.py) so users can install different versions of libraries.

Visit the official Airflow website documentation (latest stable release) for help. Documentation for dependent projects like provider packages, Docker image, and Helm Chart can be found in the documentation index. Airflow Improvement Proposals (AIPs) are documented separately.

Note: If you're looking for documentation for the main branch (latest development branch), you can find it on s./airflow-docs.

Note: Airflow currently can be run on POSIX-compliant operating systems. It is tested on fairly modern Linux distros and recent versions of macOS. On Windows you can run it via WSL2 (Windows Subsystem for Linux 2) or via Linux Containers. The work to add Windows support is tracked via #10388. You should only use Linux-based distros as a "Production" execution environment, as this is the only environment that is supported. Only one distro is used both in our CI tests and in the Community managed DockerHub image.

Note: MySQL 5.x versions are unable to run multiple schedulers, or have limitations when doing so; please see the Scheduler docs. Use the latest stable version of SQLite for local development.

** Discontinued soon; not recommended for new installations.

Use Airflow to author workflows as directed acyclic graphs (DAGs) of tasks. The Airflow scheduler executes your tasks on an array of workers while following the specified dependencies. Rich command line utilities make performing complex surgeries on DAGs a snap. The rich user interface makes it easy to visualize pipelines running in production, monitor progress, and troubleshoot issues when needed.
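To make "following the specified dependencies" more concrete, here is a minimal sketch of how an execution order can be derived from a directed acyclic graph. It uses Python's standard `graphlib` module rather than Airflow itself, and the task names (`extract`, `transform`, `load`, `notify`) are hypothetical. It illustrates the idea only and is not how the Airflow scheduler is implemented.

```python
from graphlib import CycleError, TopologicalSorter

# Hypothetical task names; each key maps to the set of tasks it depends on.
dependencies = {
    "extract": set(),
    "transform": {"extract"},
    "load": {"transform"},
    "notify": {"load"},
}


def execution_order(deps):
    """Return one valid order in which every task runs after its dependencies."""
    return list(TopologicalSorter(deps).static_order())


def is_acyclic(deps):
    """Return True when the dependency graph contains no cycle."""
    try:
        execution_order(deps)
        return True
    except CycleError:
        return False


order = execution_order(dependencies)
print(order)
```

A graph with a cycle, such as two tasks that each depend on the other, has no valid order, which is why workflows are modeled as acyclic graphs.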
Apache Airflow (or simply Airflow) is a platform to programmatically author, schedule, and monitor workflows. When workflows are defined as code, they become more maintainable, versionable, testable, and collaborative. Workflows can be developed by multiple people simultaneously. Tests can be written to validate functionality. Components are extensible, and you can build on a wide collection of existing components.

Rich scheduling and execution semantics enable you to easily define complex pipelines, running at regular intervals. Backfilling allows you to (re-)run pipelines on historical data after making changes to your logic. And the ability to rerun partial pipelines after resolving an error helps maximize efficiency.
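The backfilling idea mentioned above can be sketched in plain Python: enumerate the historical daily dates in a range and re-run the pipeline logic once per date. The function names (`daily_run_dates`, `backfill`, `report`) are hypothetical and chosen for illustration. This is a conceptual sketch, not Airflow's backfill mechanism.

```python
from datetime import date, timedelta


def daily_run_dates(start, end):
    """Yield one date per day from start (inclusive) to end (exclusive)."""
    current = start
    while current < end:
        yield current
        current += timedelta(days=1)


def backfill(start, end, pipeline):
    """Re-run `pipeline` once for every historical daily date in [start, end)."""
    return [pipeline(day) for day in daily_run_dates(start, end)]


def report(day):
    # Hypothetical pipeline step standing in for logic that processes one day of data.
    return f"processed {day.isoformat()}"


results = backfill(date(2022, 1, 1), date(2022, 1, 4), report)
print(results)
```

Because each run is keyed by its date, changing the logic in `report` and calling `backfill` again over the same range recomputes exactly those historical runs.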

Workflows are defined as Python code, which means they can be stored in version control so that you can roll back to previous versions.

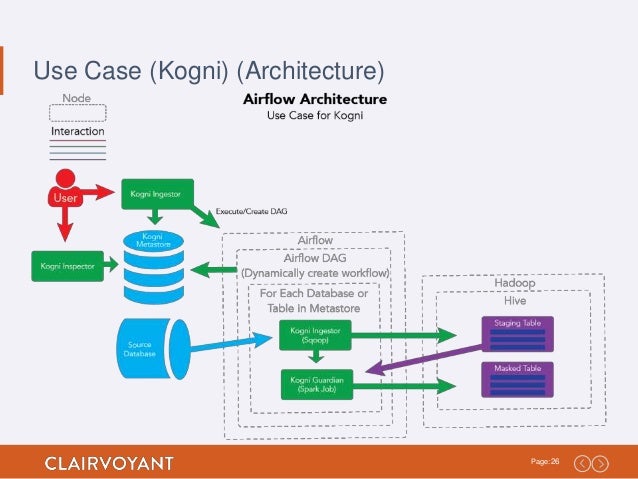

Airflow™ is a batch workflow orchestration platform. The Airflow framework contains operators to connect with many technologies and is easily extensible to connect with a new technology. Workflows that have a clear start and end, and run at regular intervals, can be programmed as an Airflow DAG. If you prefer coding over clicking, Airflow is the tool for you.

    from datetime import datetime

    from airflow import DAG
    from airflow.decorators import task
    from airflow.operators.bash import BashOperator

    # A DAG represents a workflow, a collection of tasks
    with DAG(dag_id="demo", start_date=datetime(2022, 1, 1), schedule="0 0 * * *") as dag:
        # Tasks are represented as operators
        hello = BashOperator(task_id="hello", bash_command="echo hello")

        @task()
        def airflow():
            print("airflow")

        # Set dependencies between tasks
        hello >> airflow()

The script defines a DAG named “demo”, starting on Jan 1st 2022 and running once a day. A DAG is Airflow’s representation of a workflow. It contains two tasks: a BashOperator running a Bash script and a Python function defined using the @task decorator. The >> between the tasks defines a dependency and controls in which order the tasks will be executed.

Airflow evaluates this script and executes the tasks at the set interval and in the defined order. The status of the “demo” DAG is visible in the web interface. This example demonstrates a simple Bash and Python script, but these tasks can run any arbitrary code. Think of running a Spark job, moving data between two buckets, or sending an email.

The same structure can also be seen over time, where each column represents one DAG run. These are two of the most used views in Airflow, but there are several other views which allow you to deep dive into the state of your workflows.
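The `>>` syntax in the demo DAG is ordinary Python operator overloading. As a rough illustration, the toy class below records a dependency whenever `>>` is used. The `Task` class and its `downstream` attribute are hypothetical names made up for this sketch; it is not Airflow's operator implementation.

```python
class Task:
    """Toy stand-in for a task; not Airflow's operator class."""

    def __init__(self, task_id):
        self.task_id = task_id
        self.downstream = []  # tasks that must run after this one

    def __rshift__(self, other):
        # `self >> other` records that `other` runs after `self`.
        self.downstream.append(other)
        # Returning `other` lets chains such as a >> b >> c read left to right.
        return other


hello = Task("hello")
airflow = Task("airflow")
done = Task("done")

# Same shape as the dependency line in the demo DAG, extended by one hypothetical task.
hello >> airflow >> done

downstream_of_hello = [t.task_id for t in hello.downstream]
downstream_of_airflow = [t.task_id for t in airflow.downstream]
print(downstream_of_hello, downstream_of_airflow)
```

Returning the right-hand task from `__rshift__` is the design choice that makes chaining work: `hello >> airflow` evaluates to `airflow`, which then becomes the left-hand side of the next `>>`.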
